IN THIS EDITION

1. Quotations to Open On

2. Feature Article

2.1 Accelerating Model Development and Consistency: The SAIC Digital Engineering Validation Tool by Michael J. Vinarcik

2.2 Overview of Recent Systems Engineering Guidance Provided by the National Aeronautics and Space Administration (USA) by Ralph Young

3. Notable Articles

3.1 Leading the Transformation of Model-based Engineering by Al Hoheb

3.2 Three Ways to Simplify Complex Projects by Dave Wakeman

3.3 The Test Like You Fly (TLYF) Process – Creating Operationally Realistic Tests and Finding Mission Critical Faults Before It’s Too Late by Julie White

4. Systems Engineering News

4.1 INCOSE’s Chapter Circle Awards for 2019 (Awarded in 2020)

4.2 Relying on Innovation – Site Reliability Engineering (SRE)

4.3 National Science Foundation (USA) Invests $104 Million to Launch Four New Engineering Research Centers

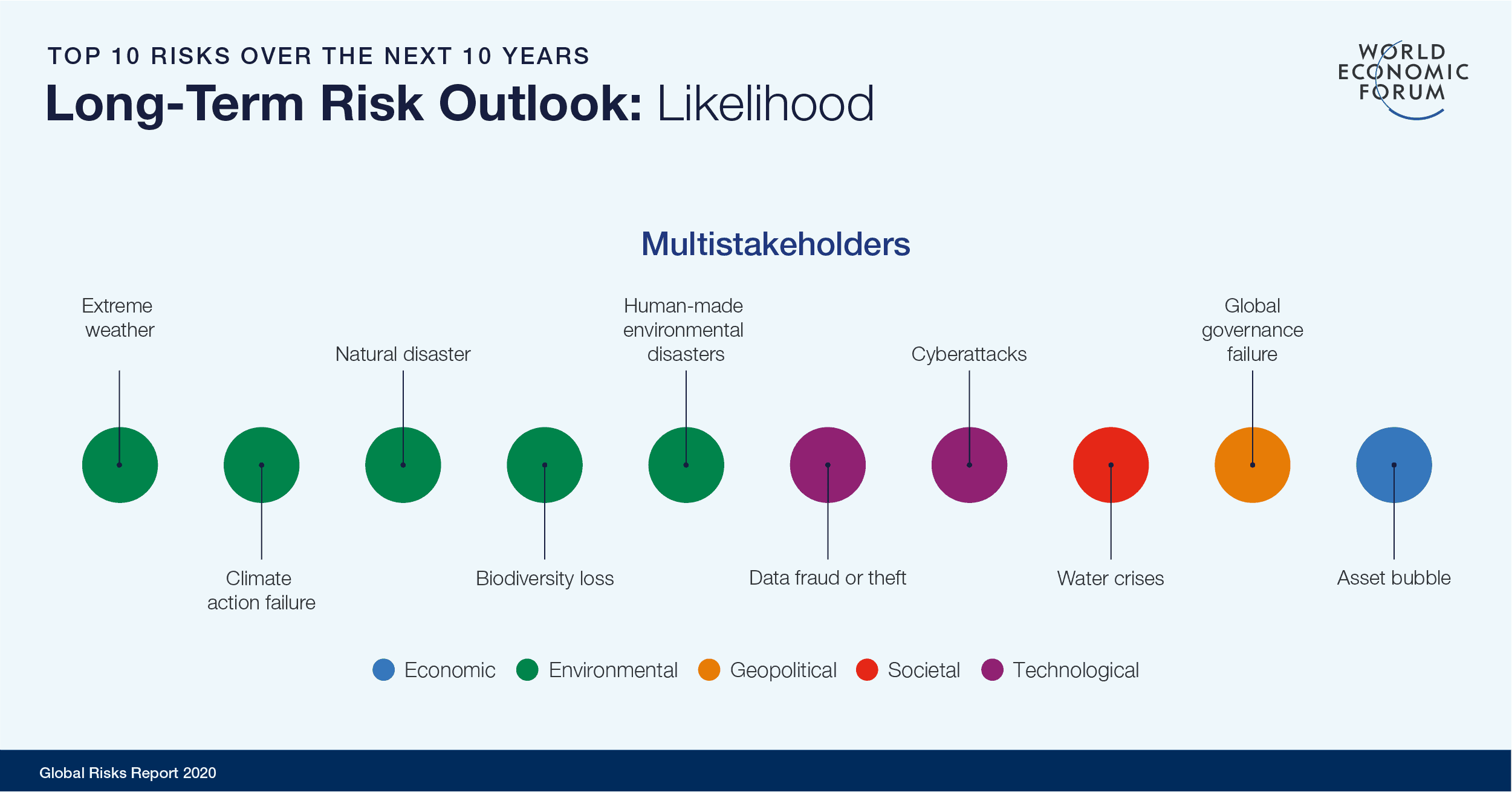

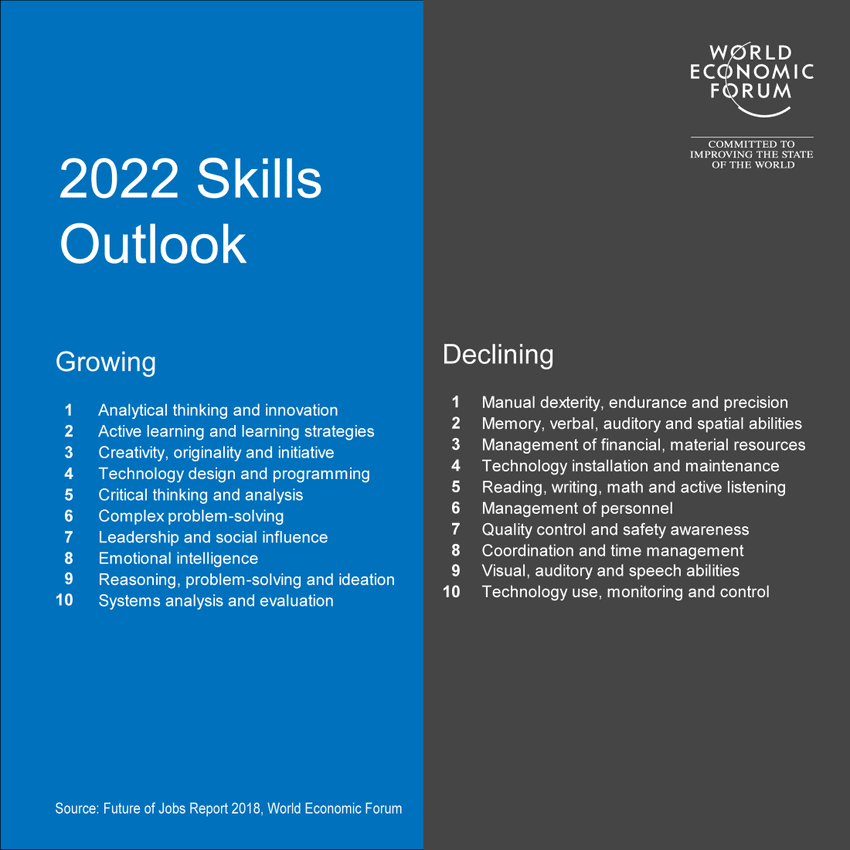

4.4 These Four Skills can make the World Better after COVID-19

4.5 An INCOSE Team is Addressing Heuristics

4.6 IT Modernization is Evolving. It’s Time to Take another Look

4.7 NIST Publishes Proposed Principles for “Explainable” AI Systems

4.8 INCOSE Western States Regional Conference

5. Featured Organizations

5.1 IEEE SMC Technical Committee on Model-Based Systems Engineering (TC-MBSE)

5.2 Establishing a Systems Engineering Organization

6. News on Software Tools Supporting Systems Engineering

6.1 Siemens and IBM Deliver Service Lifecycle Management Solution

6.2 SAIC Digital Engineering Validation Tool

7. Systems Engineering Publications

7.1 Data-Driven Science and Engineering: Machine Learning, Dynamical Systems, and Control

7.2 INCOSE’s Leading Indicators of Systems Engineering Effectiveness Guide

7.3 Systems Analysis and Design

7.4 System Design Interview – An Insider’s Guide (2nd Ed.): Step by Step Guide, Tips, and 15 System Design Interview Questions with Detailed Solutions

7.5 Systems Engineering, Systems Thinking, and Learning: A Case Study in Space Industry

7.6 An Introduction to Complex Systems Science and Its Applications

7.7 System Dynamics Review Volume 36, Number 2

7.8 Performance-Based Earned Value

8. Education and Academia

8.1 Leading Engineering & Computer Science Programs 2020

8.2 New Chair of Washington State University Encourages Discoveries in Biological Systems Engineering

8.3 Engineering Hands-on Experience in a Time of Remote Learning

9. Some Systems Engineering-Relevant Websites

10. Standards and Guides

10.1 IEEE Software & Systems Engineering Standards Committee

10.2 Application of Systems Engineering Standards

11. Some Definitions to Close On

11.1 Enterprise architecture

11.2 Heuristic

11.3 Reliability

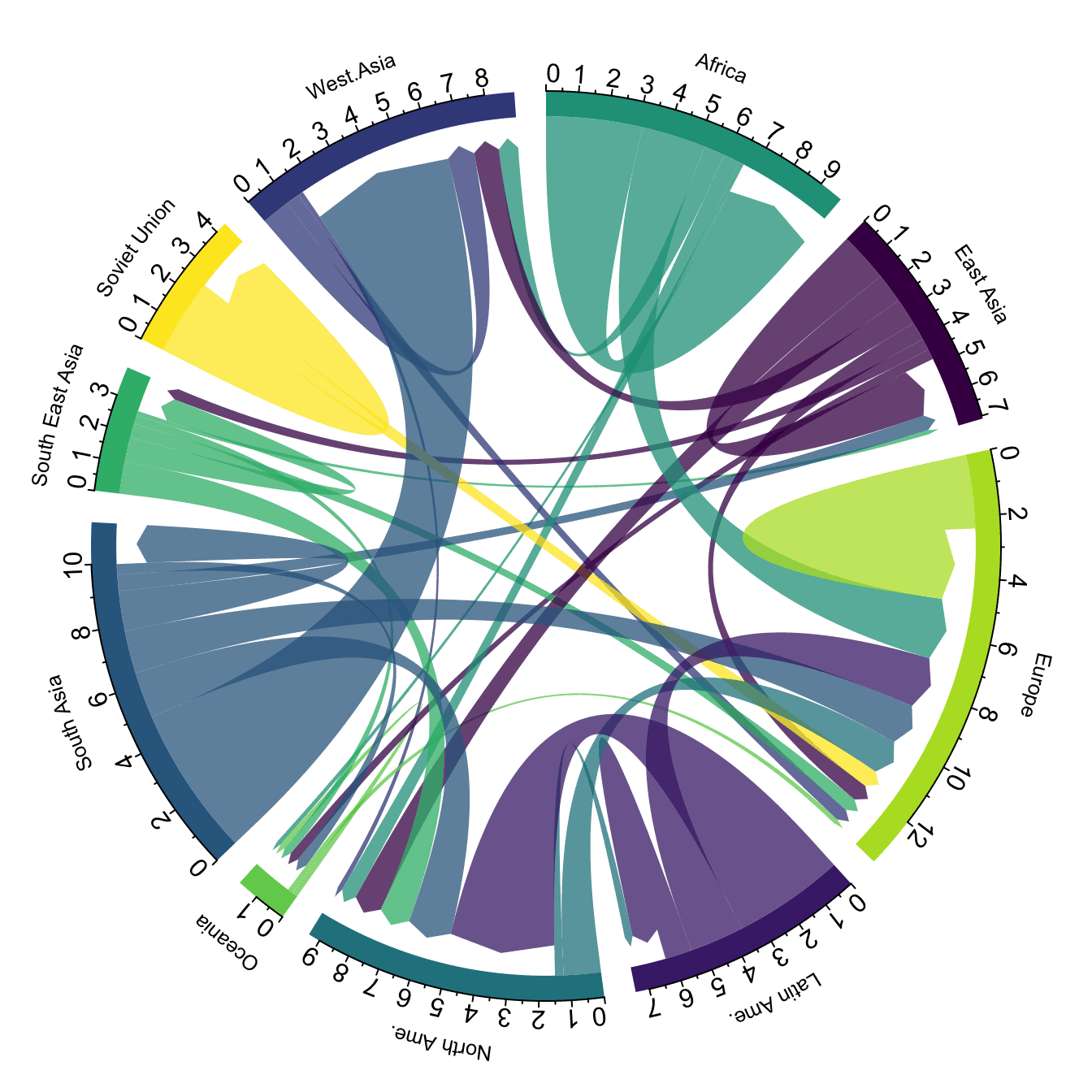

11.4 Chord Diagram

12. Conferences and Meetings

12.1 39th International Conference on Conceptual Modeling November 3-6, 2020, Vienna, Austria (Hosted Virtually)

13. PPI and CTI News

13.1 CTI Successfully Conducts First Ever INCOSE SEP Exam Prep Workshop in India

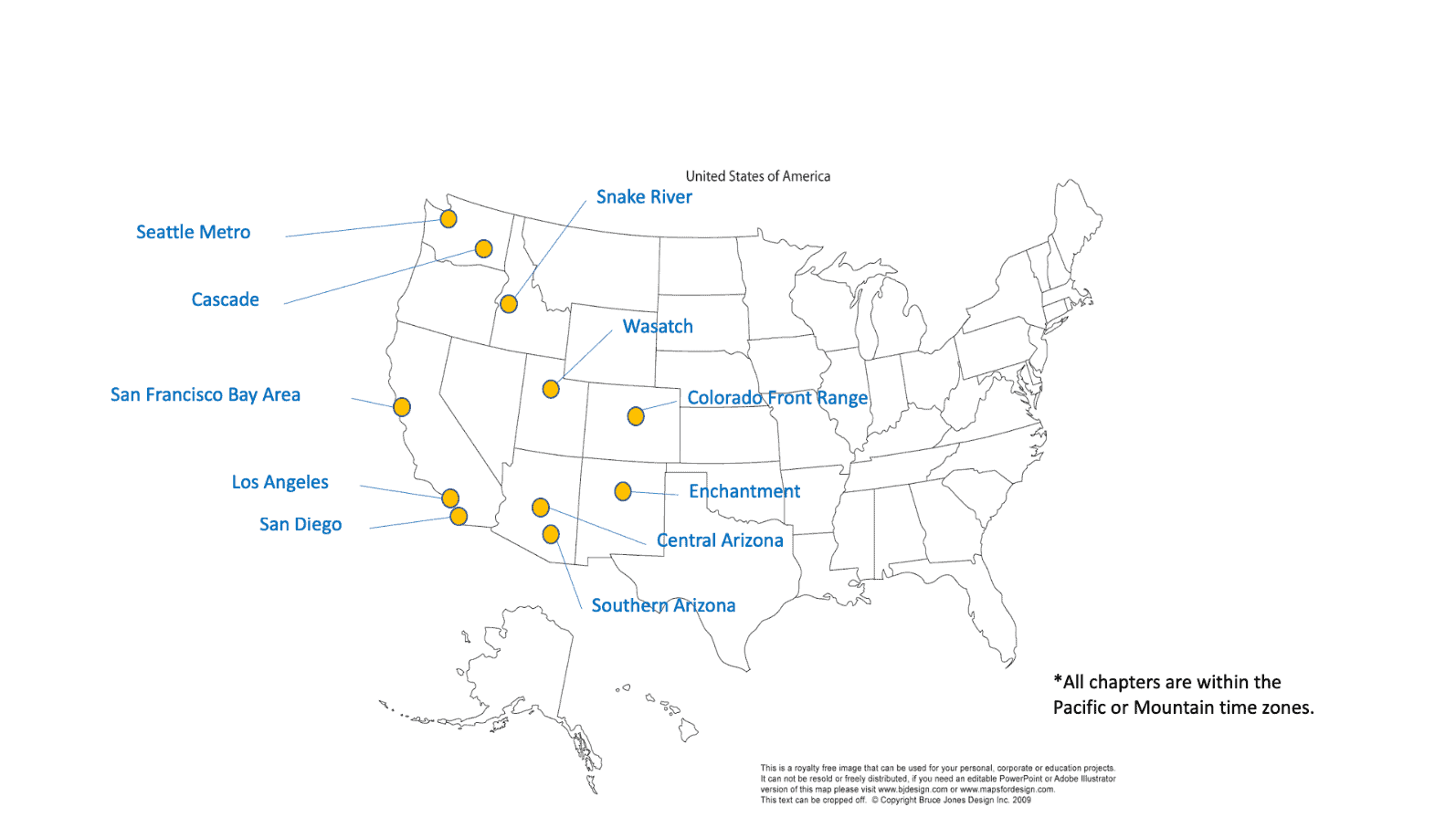

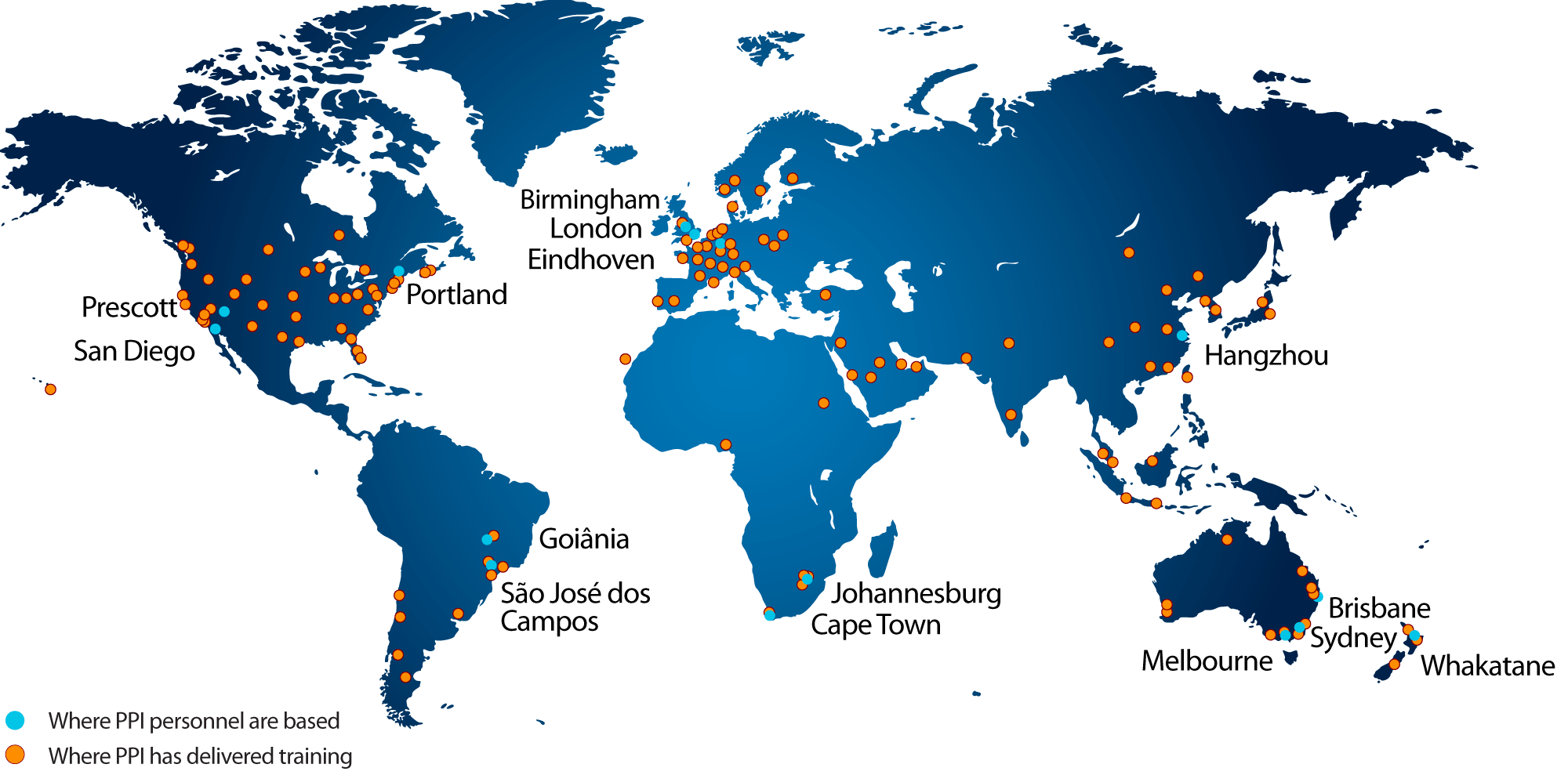

13.2 An Update to the PPI Map

14. PPI and CTI Events

15. Upcoming PPI Participation in Professional Conferences

“The principles of successfully engineering systems also apply, with one exception, to the engineering of unitary objects not constructed of interacting parts, what we can call non-systems, for example a coin created by forming a material into a shape. The one exception relates to the use of logical design.”

“You cannot create experience. You need to undergo it.”

Albert Camus

“Architecture is design but not all design is architecture.”

Grady Booch

2.1 Accelerating Model Development and Consistency: The SAIC Digital Engineering Validation Tool

by Michael J. Vinarcik, P.E., FESD

Chief Systems Engineer

Solutions and Technology Group

SAIC

Introduction

The transformation of systems engineering from Document-Intensive Systems Engineering (DISE) to Model-Based Systems Engineering (MBSE) has been underway for some time. The creation of purpose-built languages (notably SysML, the Systems Modeling Language), modeling tools, and widely published strategies (such as the U.S. Department of Defense Digital Engineering Strategy [1]) has fostered a growing interest in harnessing the power of descriptive and executable models to improve the rigor and speed of systems engineering efforts.

However, system modeling is a skill-based discipline in which the outcome of an effort is highly dependent upon the relative abilities of the individuals responsible. Demand for competent modelers continues to grow and far exceeds supply (a query of Indeed.com using the keyword “SysML” returned 620 job openings, and one using “MBSE” returned 922). Many modelers focus on simply replicating DISE artifacts, effectively treating modeling tools as mere drawing tools. Others understand the implications of modeling but fail to review their work, producing models littered with errors and omissions. Skilled modelers can disagree on methodology and apply style guides unevenly, and many references focus on superficial administrivia (such as color-coding and the number of elements on a diagram) instead of teaching the need to ensure consistency and completeness.

System Modeling as a Craft [1]

Note: This section is reproduced from “Treadstone: A Process for Improving Modeling Prowess Using Validation Rules.”

Model-Based Systems Engineering (MBSE) is a skill-based discipline that shares many similarities with software development. Crafting descriptive system models in the Systems Modeling Language (SysML) is like writing code; models are an expression of intent. Models are also path dependent, in that the experience of the development team shapes the outcome. Although good modeling practices and styles can be recognized and applied, alternate expressions of the same solution (and the existence of multiple solutions in a design set) imply that, in practice, there is no single, perfect, absolutely correct, and optimal representation of a system. Even if such a construct existed in theory (a matter left for others to debate), the effort to seek it and develop a model in conformance to it may exceed the benefits of a satisficing model available rapidly at a lower development cost.

Hillary Sillitto suggests that “Architecting a system is the activity of creating a system architecture with the aim that the system to be built will do the job it was meant to do – in other words ‘will be fit for purpose’. Architecting defines what to design, while design defines what to build.” [2]

There is significant ongoing work in applying patterns and machine learning to system models (because the rigor of a purpose-built language enables visibility and analysis techniques impossible with natural language processing). There is promise in these efforts, and they will likely enable measurable improvements in model quality. However, effective systems architecture must be executed now, which means that for the foreseeable future the capabilities of the humans-in-the-loop will dominate the outcomes of each modeling effort.

Merriam-Webster defines craft as “an occupation or trade requiring manual dexterity or artistic skill.” [3] Ryan Noguchi of Aerospace Corporation states: “System modeling is not a conceptually simple or straightforward task. It is like computer programming in many respects, requires some similar skill sets, and involves similar design tradeoffs…The conceptual model and the organization of models into modules can significantly impact their usability, consistency, and maintainability. It is important to have experienced architects familiar with both the problem space and the capabilities of the tools to lead that effort. Furthermore, building models using these tools is not always straightforward, and like software programming, is a skill that not everyone can learn equally well.” [4] In essence, it is a craft.

In Apprenticeship Patterns, Hoover and Oshineye describe the values of craftsmanship as including (emphasis added by the author):

- An attachment to… a “growth mindset.” This entails a belief that you can be better and everything can be improved if you’re prepared to work at it…

- A need to always be adapting and changing based on the feedback you get from the world around you…

- A desire to be pragmatic rather than dogmatic. This involves a willingness to trade off theoretical purity or future perfection in favor of getting things done today.

- A belief that it is better to share what we know than to create scarcity by hoarding it…

- A willingness to experiment and be proven wrong…

- A dedication to what psychologists call an internal locus of control. [https://en.wikipedia.org/wiki/Locus_of_control]. This involves taking control of and responsibility for our destinies rather than just waiting for someone else to give us the answers.

- A focus on individuals rather than groups…

- A commitment to inclusiveness…

- We are skill-centric rather than process-centric. For us, it is more important to be highly skilled than to be using the “right” process…This idea suggests that no process or tool is going to make everyone equally successful. Even though we can all improve, there will always be discrepancies in our skill levels.

- A strong preference for what Etienne Wenger calls “situated learning.” [http://wiki.c2.com/?LegitimatePeripheralParticipation]. Its essence is that the best way to learn is to be in the same room with people who are trying to achieve some goal using the skills you wish to learn.” [5]

The Promise of Automated Validation

The software community has leveraged automated code reviews for decades; the system modeling community has been somewhat slower to adopt this practice (or, where individual organizations have adopted it, they have not shared the information, in order to maintain a competitive advantage). Reviews tend to focus on scrutinizing diagrams rather than ensuring that the underlying elements, properties, and relationships are complete, accurate, and appropriate. Manual reviews do not scale: as models grow, the content to be reviewed grows while the supply of senior modelers capable of rigorous reviews remains relatively constant.

Quality check tables, matrices, and other derived work products can be created to help with these audits, but they still require humans-in-the-loop to assess them. Fortunately, SysML modeling tools do support the creation of automated validation rules. These rules, once defined, can be applied to all or part of a model. The rules execute rapidly, typically in seconds or minutes, and identify each violation. However, successfully creating a validation ruleset requires:

- Articulation of the desired style and modeling practices;

- Creation of robust test logic to implement each rule; and

- Testing of the rules to eliminate false positives and negatives that would undermine confidence in the rules.
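To make these three requirements concrete, here is a minimal, tool-neutral Python sketch of how a ruleset might be represented and run. It is not the MagicDraw or Rhapsody validation engine, since the SAIC rules are implemented natively in the modeling tools. The `Element`, `Rule`, `Violation`, and `validate` names and the flat element representation are hypothetical. The three sample rules paraphrase ACTIVITYNAME, CONTROLNODEINCOMING, and CONTROLNODEOUTGOING from the Appendix.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Element:
    """Hypothetical, tool-neutral stand-in for a model element."""
    id: str
    metaclass: str          # e.g. "Activity", "JoinNode", "ForkNode"
    name: str = ""
    incoming: int = 0       # count of incoming activity edges
    outgoing: int = 0       # count of outgoing activity edges


@dataclass(frozen=True)
class Rule:
    rule_id: str
    applies_to: frozenset             # metaclasses this rule validates
    severity: str                     # "error" or "info", as in the Appendix
    check: Callable[[Element], bool]  # returns True when the element conforms
    message: str


@dataclass(frozen=True)
class Violation:
    rule_id: str
    element_id: str
    severity: str
    message: str


# Paraphrases of three Appendix rules (illustrative only).
RULES = [
    Rule("ACTIVITYNAME", frozenset({"Activity"}), "error",
         lambda e: e.name.strip() != "", "Activities must be named."),
    Rule("CONTROLNODEINCOMING", frozenset({"JoinNode", "MergeNode"}), "error",
         lambda e: e.incoming >= 2,
         "Joins and merges must have at least two incoming flows."),
    Rule("CONTROLNODEOUTGOING", frozenset({"ForkNode", "DecisionNode"}), "error",
         lambda e: e.outgoing >= 2,
         "Forks and decisions must have at least two outgoing flows."),
]


def validate(elements: Iterable[Element], rules: Iterable[Rule]) -> list:
    """Apply each rule to every element of the metaclasses it targets.

    Passing a subset of elements validates only part of the model.
    Every failed check is reported as its own Violation.
    """
    rules = list(rules)  # allow the ruleset to be iterated once per element
    found = []
    for element in elements:
        for rule in rules:
            if element.metaclass in rule.applies_to and not rule.check(element):
                found.append(Violation(rule.rule_id, element.id,
                                       rule.severity, rule.message))
    return found


if __name__ == "__main__":
    model = [
        Element("a1", "Activity"),              # unnamed activity
        Element("j1", "JoinNode", incoming=1),  # join with only one input
        Element("f1", "ForkNode", outgoing=2),  # conforming fork
    ]
    for v in validate(model, RULES):
        print(f"[{v.severity}] {v.rule_id} on {v.element_id}: {v.message}")
```

Running it reports ACTIVITYNAME for `a1` and CONTROLNODEINCOMING for `j1`, and `f1` passes. Keeping each check as a small predicate also makes the third requirement, testing against known-good and known-bad examples, straightforward.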

Veejay Gorospe’s work at the 2019 MBSE Cyber Experience Symposium called the author’s attention to the possibilities that harnessing the MagicDraw validation engine represents. Because he was already familiar with the Structured Expression language native to the No Magic tool suite, the author began experimenting with validation rules in the fall of 2019 to teach system modeling and grade student models (see [1]). The initial validation rules were transferred to SAIC’s TeamWork Cloud environment, where they were subsequently matured. Input and best practices from multiple senior modelers in SAIC’s Solutions and Technology Group were codified in the rules; the rules were then tested by applying them to student models as well as by tasking junior modelers to create an example model that conformed to the rules. This allowed the ruleset to grow rapidly and to be debugged exhaustively in multiple environments.
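The testing approach described here, running rules against models known to conform and against models with known defects, can be illustrated with a small regression test. The sketch below is a hypothetical Python rendering of ACTIVITYEDGEGUARD, under which flows leaving a decision node need guards and control flows may use probabilities instead. The `Edge` class and both fixtures are invented for illustration. Treating a blank guard as undefined is an assumption on our part.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Edge:
    """Hypothetical activity edge record."""
    id: str
    kind: str                       # "control" or "object"
    source_metaclass: str           # metaclass of the edge's source node
    guard: Optional[str] = None
    probability: Optional[float] = None


def activity_edge_guard(edge: Edge) -> bool:
    """Paraphrase of ACTIVITYEDGEGUARD; True means the edge conforms."""
    if edge.source_metaclass != "DecisionNode":
        return True                 # rule applies only to flows leaving a decision
    if edge.guard and edge.guard.strip():
        return True                 # a non-blank guard is defined
    # Exemption: control flows may carry a probability instead of a guard.
    return edge.kind == "control" and edge.probability is not None


def violations(edges: List[Edge], rule: Callable[[Edge], bool]) -> List[str]:
    """IDs of the edges the rule flags, in input order."""
    return [e.id for e in edges if not rule(e)]


# Known-good fixture: any hit here is a false positive.
CONFORMING = [
    Edge("e1", "object", "DecisionNode", guard="[fuel > 0]"),
    Edge("e2", "control", "DecisionNode", probability=0.3),
    Edge("e3", "control", "ForkNode"),   # out of scope for this rule
]

# Known-bad fixture: every edge must be flagged, or the rule has a false negative.
NONCONFORMING = [
    Edge("b1", "object", "DecisionNode", probability=0.5),  # probability on an object flow
    Edge("b2", "control", "DecisionNode"),                  # neither guard nor probability
    Edge("b3", "object", "DecisionNode", guard="  "),        # blank guard
]


def test_activity_edge_guard() -> None:
    assert violations(CONFORMING, activity_edge_guard) == []
    assert violations(NONCONFORMING, activity_edge_guard) == ["b1", "b2", "b3"]


if __name__ == "__main__":
    test_activity_edge_guard()
    print("ACTIVITYEDGEGUARD: no false positives or negatives on the fixtures")
```

The exemption for control flows is exactly the kind of detail that produces false positives if it is missed. Pinning it down in both fixtures keeps later edits to the rule honest.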

SAIC also developed a model-based style guide that illustrates each rule and its rationale, and ported a subset of the rules to IBM Rhapsody. Videos that illustrate key points (such as the customized “flow set” that enables the linking of deeply nested ports and the full synchronization of behavioral and structural elements) are also included. The v1.6 release, published in August 2020, also includes a classification profile that allows relationship-based tagging of model elements to explicitly assign security classification and data rights.

An Important Milestone for the Modeling Community

SAIC’s Solutions and Technology Group has released these customizations and rules to the modeling community free of charge. This content was not free to develop (its development involved numerous senior and junior modelers over an extended period of time); however, SAIC believes that sharing this native content and allowing its reuse (with attribution) will facilitate fruitful discussions within the modeling community, encourage the spread of good modeling practices, enable peer review from other senior modelers, and demonstrate capabilities that may be added to future tool or language releases.

For example, the customizations that enable seamless navigation between behavioral and structural elements also force explicit linking of these often disparate (and out-of-synch) views of system intent.
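One way to picture this explicit linking is as two checks on each object flow, loosely modeled on INTERFACENEEDED and OBJECTFLOWCOMPAT in the Appendix. First, an object flow whose ends belong to different blocks should be realized by an item flow. Second, each realizing item flow should convey the signal typing the flow's source, or a specialization of it. The Python below is a hypothetical sketch. The classes and the single-parent generalization are assumptions, as is reading "specific classifiers" as specializations of the source signal. The exemptions listed for INTERFACENEEDED are omitted.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Signal:
    name: str
    general: Optional["Signal"] = None  # single parent, for brevity

    def conforms_to(self, other: "Signal") -> bool:
        """True if this signal is `other` or specializes it (directly or not)."""
        current: Optional[Signal] = self
        while current is not None:
            if current is other:
                return True
            current = current.general
        return False


@dataclass
class ItemFlow:
    conveyed: List[Signal]


@dataclass
class ObjectFlow:
    id: str
    source_owner: str   # block performing the source action (hypothetical field)
    target_owner: str   # block performing the target action (hypothetical field)
    source_type: Signal
    realized_by: List[ItemFlow] = field(default_factory=list)


def check_object_flow(flow: ObjectFlow) -> List[str]:
    """Return the IDs of the (paraphrased) rules this object flow violates."""
    findings = []
    if flow.source_owner != flow.target_owner and not flow.realized_by:
        # Behavior crosses a structural boundary with no interface behind it.
        findings.append("INTERFACENEEDED")
    if any(not any(sig.conforms_to(flow.source_type) for sig in item.conveyed)
           for item in flow.realized_by):
        # Some realizing item flow does not convey a compatible signal.
        findings.append("OBJECTFLOWCOMPAT")
    return findings


if __name__ == "__main__":
    telemetry = Signal("Telemetry")
    housekeeping = Signal("HousekeepingTelemetry", general=telemetry)
    command = Signal("Command")
    flows = [
        ObjectFlow("of1", "Spacecraft", "GroundStation", telemetry),
        ObjectFlow("of2", "Spacecraft", "GroundStation", telemetry,
                   [ItemFlow([housekeeping])]),
        ObjectFlow("of3", "Spacecraft", "GroundStation", telemetry,
                   [ItemFlow([command])]),
    ]
    for f in flows:
        print(f.id, check_object_flow(f))
```

In this sketch the behavioral view (object flows) and the structural view (item flows on connectors) cannot drift apart silently. Every boundary-crossing flow must point at structure, and the structure must carry what the behavior says it carries.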

Feedback from the modeling community has been positive, with a general consensus emerging that this is the largest and most complete ruleset publicly released to date. Some minor bugs have been reported, and fruitful discussions (often related to some of the more controversial aspects of the modeling style) have been initiated.

These rules also have considerable value in fostering the development of junior modelers. As the Treadstone paper illustrates, the timely feedback provided by the validation rules effectively mentors novice modelers and shapes their modeling style without consuming ongoing labor hours of senior staff. This frees experienced modelers to focus on high-value consultation and method development instead of being bogged down with trivial style guide reviews and basic methodology discussions. The rules also provide positive feedback and build confidence: when novice modelers create models that pass validation, they receive tangible evidence that their skill is developing. This emboldens them to continue deepening their understanding of the language, tool, and methodology.

SAIC’s Solutions and Technology Group believes that modeling is a craft (as described above) and is proud to contribute to its global development; we encourage other leading modeling organizations to join us in promoting the use of these rules as a means to develop the modeling talent needed to complete the transformation from DISE to MBSE.

SAIC Digital Engineering (DE) Validation Tool v1.6

The August 2020 release of the validation tool includes:

- SAIC DE Profile for MagicDraw/Cameo Modeling tools: Contains customizations and validation rules.

- SAIC DE Style Guide: Model-based style guide with details about execution and rationale.

- SAIC DE System Model Example: Example model built using the profile, rules, and style guide. The subject of the model is NASA’s Ranger lunar probe from the 1960s.

- SAIC DE Profile for Rhapsody: A subset of our validation rules ported to IBM’s Rhapsody modeling tool.

- SAIC Customization Profile: Contains customizations to facilitate tracking of classification and data rights on a per-element basis.

- How-to Videos:

  - Introduction

  - Linking Flows

  - Flow Sets

  - Classification Profile Usage

The rules provided in this release are listed in the Appendix; readers are strongly encouraged to examine the style guide and rules in their native model format. No Magic provides a free reader version of its modeling tool that may be used to examine these models.
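Several of the listed rules (PARTLOOP, ACTIVITYLOOP, OPERATIONLOOP, and INTBLOCKLOOP) flag recursion in a decomposition. How the SAIC rules implement this is not described here. The underlying question, though, is graph reachability. A minimal Python sketch follows; it assumes the structure tree is given as a hypothetical mapping from each block to the blocks typing its part properties.

```python
from typing import Dict, List, Set


def reaches(start: str, target: str, graph: Dict[str, List[str]]) -> bool:
    """True if `target` can be reached from `start` by following one or more edges."""
    seen: Set[str] = set()
    stack = list(graph.get(start, []))
    while stack:
        node = stack.pop()
        if node == target:
            return True
        if node not in seen:
            seen.add(node)
            stack.extend(graph.get(node, []))
    return False


def nodes_on_loops(graph: Dict[str, List[str]]) -> Set[str]:
    """Return every node that lies on a cycle (including self-loops)."""
    return {node for node in graph if reaches(node, node, graph)}


# Hypothetical structure tree: block -> blocks typing its part properties.
STRUCTURE = {
    "Spacecraft": ["Bus", "Payload"],
    "Bus": ["PowerSubsystem"],
    "PowerSubsystem": ["Bus"],   # "upstream" part property: recursion
    "Payload": ["Camera"],
    "Camera": [],
}

if __name__ == "__main__":
    print(sorted(nodes_on_loops(STRUCTURE)))  # ['Bus', 'PowerSubsystem']
```

The same function applies unchanged to a call graph (activity to called behaviors, or operation to the operations called in its method) for ACTIVITYLOOP- and OPERATIONLOOP-style checks. Checking each node separately costs O(V·(V+E)). For very large models, a strongly-connected-components algorithm such as Tarjan's finds the same nodes in one pass.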

The content of this release is ITAR-approved and may be freely redistributed as licensed in the models.

Conclusion

SAIC encourages the widespread review and/or usage of these validation rules and customizations. They represent a significant investment of time, effort, and resources, and provide any modeling effort with access to a ready-made, proven modeling style that delivers effectiveness, efficiency, and elegance. Individual rules may be included (or omitted) to tailor the provided ruleset to an organization’s or program’s needs.
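Tailoring can be thought of as filtering a rule catalog. The hypothetical Python helper below selects rules by ID and severity. It only illustrates the idea; how a ruleset is actually configured in MagicDraw/Cameo or Rhapsody is not shown. The rule IDs and severities are taken from the Appendix.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set


@dataclass(frozen=True)
class RuleInfo:
    rule_id: str
    severity: str  # "error" or "info", as listed in the Appendix


# A few catalog entries copied from the Appendix (IDs and severities only).
CATALOG = [
    RuleInfo("ACTIVITYNAME", "error"),
    RuleInfo("ALLOCATIONPROHIBIT", "error"),
    RuleInfo("IBDNEEDED", "info"),
    RuleInfo("OPUSAGE", "info"),
    RuleInfo("PROXYPORT", "error"),
]


def tailor(catalog: Iterable[RuleInfo], *, exclude: Iterable[str] = (),
           include_only: Optional[Set[str]] = None,
           errors_only: bool = False) -> List[RuleInfo]:
    """Hypothetical helper: return the rules a program chooses to enforce."""
    excluded = set(exclude)
    selected = []
    for rule in catalog:
        if include_only is not None and rule.rule_id not in include_only:
            continue  # not on the program's allow-list
        if rule.rule_id in excluded:
            continue  # explicitly omitted
        if errors_only and rule.severity != "error":
            continue  # drop informational rules
        selected.append(rule)
    return selected


if __name__ == "__main__":
    program_rules = tailor(CATALOG, exclude={"ALLOCATIONPROHIBIT"}, errors_only=True)
    print([r.rule_id for r in program_rules])  # ['ACTIVITYNAME', 'PROXYPORT']
```

For instance, a program that prefers SysML allocations could drop ALLOCATIONPROHIBIT while keeping the remaining error-level rules.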

SAIC also welcomes feedback and suggestions for improvements and encourages other organizations to share their rules and approach publicly (with native models to facilitate scrutiny).

Contact: DigitalEngineering@saic.com

https://www.saic.com/digital-engineering-validation-tool

References

| [1] | M. J. Vinarcik, “Treadstone: A Process for Improving Modeling Prowess Using Validation Rules,” in American Society for Engineering Education, 2020. |

| [2] | H. Sillitto, Architecting Systems: Concepts, Principles and Practice, London: College Publications, 2014. |

| [3] | Merriam-Webster, [Online]. Available: https://www.merriam-webster.com/dictionary/craft. [Accessed 2 February 2020]. |

| [4] | R. A. Noguchi, “Lessons Learned and Recommended Best Practices from Model-Based Systems Engineering (MBSE) Pilot Projects,” Aerospace Corporation, 2016. |

| [5] | D. H. Hoover and A. Oshineye, Apprenticeship Patterns: Guidance for the Aspiring Software Craftsman, Boston: O’Reilly Media, 2009. |

Appendix: SAIC Digital Engineering Validation Rules v1.6

| Rule ID | Validated Element | Severity | Description |

|---|---|---|---|

| ACCEPTEVENTMATCH | AcceptEventAction | error | The signal triggering an accept event action must match the signal typing its output pin (same signal or one of its specific classifiers). |

| ACCEPTEVENTOUTPUT | AcceptEventAction | error | Accept Events must own an output pin. If you are modeling a signal that triggers a state transition, associate the object flow with an item flow and realize the transition. |

| ACCEPTEVENTPORTMATCH | AcceptEventAction | error | The assigned and inferred ports (via item flow realization) must match. |

| ACCEPTEVENTTIMEEVENTTRIGGER | AcceptEventAction | error | Accept events triggered by time events must have WHEN defined. |

| ACCEPTOUTGOING | AcceptEventAction | error | If an Accept Event outgoing object flow is realized by an item flow or flow set, the signal that triggers the accept event must be conveyed by the item flows or flow set. |

| ACTIVITYACTIONSTM | Activity | error | All activities owned by state machines must have at least one action node. |

| ACTIVITYDOCUMENTATION | Activity | error | All activities must have documentation; activities that are methods for operations or are classifier behaviors for use cases are exempt. |

| ACTIVITYEDGEGUARD | ActivityEdge | error | All control and object flows exiting a decision node must have guards defined (control flows may have probabilities defined instead). |

| ACTIVITYEDGEMISMATCH | ControlNode | error | Flows into and out of a control node (join, fork, merge, or decision) must be of the same type (object or control). |

| ACTIVITYFINAL | Activity | error | Activities that own diagrams must own one final node and it must have one incoming control flow. |

| ACTIVITYINITIAL | Activity | error | Activities that own diagrams must own one initial node and it must have one outgoing control flow. |

| ACTIVITYLEAF | Activity | error | An activity may not own diagrams or operations if its “Is Leaf” attribute is set to true. |

| ACTIVITYLEVEL | Activity | error | Activities may not call operations owned by elements with both logical and physical stereotypes applied (review the blocks that own the operations called by this activity and ensure they are all logical or all physical). |

| ACTIVITYLOOP | Activity | error | This activity is part of a loop (a nested call behavior within its decomposition calls a behavior upstream in the activity decomposition). |

| ACTIVITYNAME | Activity | error | Activities must be named. |

| ACTIVITYOWNS | Activity | error | Activities must own at least one diagram or operation. If an activity will not be further decomposed, set its “Is Leaf” attribute to true. |

| ACTIVITYPARAMETERFLOW | ActivityParameterNode | error | All activity parameter nodes must have incoming or outgoing object flows. |

| ACTIVITYPARAMETERSTM | Activity | error | State Machine Entry, Do, Exit, and Transition activities may not have parameters. |

| ACTORASSOCIATION | Association | error | Actors may not be associated with other actors. |

| ACTORDOCUMENTATION | Actor | error | All Actors must have documentation. |

| ACTORNAME | Actor | error | All Actors must have names. |

| ACTORUSECASE | Actor | error | All use case elements must be associated with at least one use case or be specialized by other actors. |

| ACTPARTYPE | ActivityParameterNode | error | All activity parameter nodes must be typed by signals or value types. |

| ACTREALIZATION | Actor | error | All Actors and other use case elements must be realized by at least one part property in the structure tree of a system context block. Environmental effects may be realized by value properties in the structure tree of a system context block. Actors that are generalizations of other actors are exempt from this rule. |

| ALLOCATIONPROHIBIT | Allocate [Abstraction] | error | Allocations are prohibited; use realization (between levels of abstraction) or satisfy (between requirements and other model elements). |

| ANNOTATEDELEMENTS | Problem [Comment], Rationale [Comment] | info | Problem and rationale elements should annotate at least one model element. |

| ARTIFACTNAME | source content [Artifact] | error | All artifact elements must be named. |

| BLOCKNAME | Block [Class] | error | Blocks must be named. |

| BLOCKUSECASE | Block [Class] | error | Blocks may not be the subject of use cases or associated with them. Use use case elements instead, and realize them with blocks. |

| BUFFERFLOW | CentralBufferNode | error | Buffers and data stores must have at least one object flow (incoming or outgoing). |

| CALLBEHAVIORBEHAVIOR | CallBehaviorAction | error | Call behavior actions must have the called behavior specified. |

| CALLBEHAVIORSELF | CallBehaviorAction | error | Call behavior actions may not call the activity that owns them. |

| CALLBEHAVIORSTATE | CallBehaviorAction | error | Call behaviors may not be used in state machines owned by blocks. Call operations (and use methods, if necessary, to further decompose operations). |

| CALLOPERATIONOPERATION | CallOperationAction | error | Call operation actions must have the called operation specified. |

| CHANGEEVENTEXPRESSION | Transition | error | All transitions triggered by change events must have CHANGE EXPRESSION defined. |

| CLASSPROHIBIT | Class | error | Unstereotyped classes are prohibited; use blocks, activities, or other elements instead. Classes in packages created by MagicDraw (such as CSV Import) are exempt. |

| COMMENTBODY | Comment | error | The body of comments, problems, and rationale may not be empty. |

| CONBLOCKDOCUMENTATION | Block [Class] | error | All blocks that type part properties of the system context must have documentation. |

| CONNECTIONPOINTCONNECTED | ConnectionPointReference | error | Connection points must have one transition (outgoing or incoming). |

| CONNECTOREND | Connector | error | Connector ends must be proxy ports. |

| CONSTRAINTBLOCKAPPLY | ConstraintBlock [Class] | error | Constraint blocks must own the constraints applied to them. |

| CONSTRAINTBLOCKDOC | ConstraintBlock [Class] | error | All constraint blocks must have documentation. |

| CONSTRAINTCOUNT | ConstraintBlock [Class] | error | Constraint blocks must own one (and only one) constraint. Separate multiple equations into multiple constraint blocks. |

| CONSTRAINTPARAM | ConstraintBlock [Class] | error | Constraint blocks must own one or more constraint parameters. |

| CONSTRAINTPARCONNECT | ConstraintParameter [Port] | error | If a constraint block is used to type a constraint property, its constraint parameters must be connected with binding connectors on a parametric diagram. |

| CONSTRAINTSPECIFICATION | Constraint | error | Constraint specifications may not be empty. |

| CONSTRAINTTYPE | ConstraintProperty [Property] | error | Constraint properties must be typed by constraint blocks. |

| CONTEXTPARTS | System context [Class] | error | System context blocks must own at least one part property. |

| CONTEXTREALIZATION | PartProperty [Property] | error | All part properties owned by logical system context blocks must realize one or more use case elements; those owned by a physical system context block must realize one or more part properties typed by logical blocks. |

| CONTEXTTYPE | PartProperty [Property] | error | Part properties may not be typed by system context blocks; context blocks should typically be the top-level block that owns the system context IBD at a given level of abstraction. |

| CONTROLNODEINCOMING | JoinNode, MergeNode | error | Joins and merges must have at least two incoming flows. |

| CONTROLNODEOUTGOING | ForkNode, DecisionNode | error | Forks and decisions must have at least two outgoing flows. |

| CONVEYTYPE | ItemFlow [InformationFlow] | error | Item flows may only convey signals. |

| CREATEOBJECTNAME | CreateObjectAction | error | Create Object actions must be named. |

| DATASTORETYPE | DataStoreNode, CentralBufferNode | error | Data stores must be typed by signals or value types. |

| DECISIONNODENAME | DecisionNode | error | Decision nodes must have a name (this is used to specify the decision). |

| DESTROYOBJECTNAME | DestroyObjectAction | error | Destroy Object actions must be named. |

| DIAGRAMNAME | Diagram | error | Diagram names may not be blank. |

| ENTRYEXITNAME | Pseudostate | error | Entry and exit points for state machines must be named. |

| EXTENDEXTPOINT | Extend | error | Extend relationships must be assigned to at least one extension point. |

| EXTENSIONPOINTUSE | ExtensionPoint | error | Extension points must be associated with at least one Extend relationship. |

| EXTERNALPARTTYPE | PartProperty [Property] | error | Part properties typed by external blocks must be owned by system context or external blocks. |

| FLOWCONNECTOR | InformationFlow | error | This flow is not realized by any connectors. |

| FLOWDIRECTION | FlowProperty [Property] | error | All flow properties must be out or inout; this ensures consistent conjugation (all 1-way in flows are conjugated). |

| FLOWFINALINCOMING | FlowFinalNode | error | All flow final nodes must have one incoming flow. |

| FLOWLEVEL | Signal | error | This signal types flow properties in multiple levels of the architecture (create unique signals for logical and physical architectures to resolve). |

| FLOWSETENDS | flow set [InformationFlow] | error | If a flow set has individual flows assigned, the individual flows must connect the source and target of the flow set. |

| FLOWSETSOURCE | flow set [InformationFlow] | error | Ports that are the source of a flow set must have flow properties compatible with the conveyed signals of the flow set. |

| FLOWSETTARGET | flow set [InformationFlow] | error | Ports that are the target of a flow set must have flow properties compatible with the conveyed signals of the flow set. |

| FLOWTYPE | FlowProperty [Property] | error | All flow properties must be typed by signals. |

| GUARDSOURCE | ObjectFlow, ControlFlow | error | Object flows and control flows with guards must have decision nodes as their source. |

| IBDNEEDED | Block [Class] | info | Blocks that own part properties typed by blocks that own ports require IBDs to show their internal interfaces/connections/flows. |

| IBDOWNER | Diagram | error | IBDs must be owned by a block. |

| IBNOTSPECBLOCK | InterfaceBlock [Class] | error | Interface blocks may not specialize non-interface blocks. |

| IMPITEMFLOWCOMPAT | flow set [InformationFlow] | error | Flow properties of proxy ports connected by flow sets must be compatible. |

| INPINSCONN | InputPin | error | Input pins must have an incoming object flow (target pins are exempt from this rule). |

| INTBLOCKFLOW | InterfaceBlock [Class] | error | Interface blocks must own at least one flow property or port. |

| INTBLOCKLOOP | InterfaceBlock [Class] | error | This interface block is part of a loop (ports it owns are typed by interface blocks that lead to looping behavior and pin expansion ad infinitum). |

| INTERFACENEEDED | ObjectFlow | info | The owners of the ends of this object flow are different and it is not realized by an item flow. Object flows connecting pins/nodes typed by value types or owned by Read Self/Read Structural Feature actions are exempt from this rule; operations owned by activities are also exempt (since they are functional and not structural). |

| ITEMFLOWCONVEYED | ItemFlow [InformationFlow] | error | All item flows must convey one or more signals or be part of a flow set. |

| LIFELINETYPE | Lifeline | error | All lifelines must be typed by blocks. |

| LOGICALARCH | PartProperty [Property] | error | Part properties that are owned by a block with a logical stereotype must be typed by a block with a logical stereotype. |

| LOGICALCONNFLOWS | Connector | info | All connectors that connect ports in the logical architecture must have at least one flow. |

| LOGICALPHYSICAL | Block [Class] | error | Blocks cannot have both logical and physical stereotypes applied. |

| LOGICALPORT | ProxyPort [Port] | error | All proxy ports owned by blocks with the <<logical>> stereotype applied must be typed by interface blocks with the <<logical>> stereotype applied. |

| LOGTERMPARTS | logical [Class] | error | Logical blocks with ATOMIC = TRUE may not own part properties. |

| MESSAGEFLOWNEEDED | Message | info | This message signature is a signal and is not realized by any item flows or flow sets. |

| MESSAGEFLOWS | Message | error | If a message is associated with item flows or flow sets, they must convey its signature signal. |

| MESSAGESIGNATURE | Message | error | All messages on sequence diagrams must have signatures assigned (signal or operation). |

| METHODCALL | CallOperationAction | error | Call operations that are part of an operation’s method must call operations within the structure of the block that owns the method. |

| NOATTACHMENT | AttachedFile [Comment] | error | Embedding files in the model is not allowed. Use a hyperlink to an authoritative source instead (use an artifact if necessary to represent the embedded file). |

| OBJECTFLOWCOMPAT | ObjectFlow | error | If an object flow is realized by an item flow or flow set, those flows must convey the signal typing its source (exact match or its specific classifiers). |

| OBJECTFLOWENDS | ObjectFlow | error | Object flows must have input/output pins as their source/target (no direct connection with send or accept events). |

| OBJECTFLOWENDTYPE | ObjectFlow | error | The target of an object flow must be typed by the same elements as its source (or that element’s general classifier). Object flows that terminate in a flow final node are exempt. |

| OPAQUEACTIONBODY | OpaqueAction | error | The body of an opaque action may not be blank. |

| OPDOCUMENTATION | Operation | error | All operations must have documentation. |

| OPERATIONLOOP | Operation | error | This operation is part of a loop (a nested call operation within its method decomposition calls an operation upstream in the decomposition). |

| OPERATIONNAME | Operation | error | Operations must be named. |

| OPERATIONSTATE | Operation | info | This operation is owned by a block but not called by an activity related to a state machine. Operations may be called directly or within methods of operations that are called. Operations owned by externals are exempt. |

| OPOWNER | Operation | error | Operations must be owned by activities or blocks with context, logical, or physical stereotypes applied. |

| OPUSAGE | Operation | info | This operation is not used (called in an activity or on a sequence diagram) in the model. Operations owned by externals are exempt. |

| OUTPINSCONN | OutputPin | error | Output pins must have an outgoing object flow. |

| PACKAGENAME | Package | error | Packages must be named. |

| PARAMETERLEVEL | Parameter | error | The signal typing this parameter is used in both the logical and physical architectures. Create separate signal taxonomies for each. |

| PARAMETRICNEEDED | Block [Class] | error | Blocks that own constraint properties must have parametric diagrams. |

| PARATYPE | Parameter | error | All parameters owned by operations must be typed. |

| PARTIB | PartProperty [Property] | error | Part properties may not be typed by Interface blocks. |

| PARTLOOP | Block [Class] | error | There is a part property loop associated with this block (a block in the structure owns a part property typed by a block “upstream,” leading to recursion in the structure tree). |

| PARTLOOPGEN | Generalization | error | This generalization causes a part loop (the source block has an inherited part property typed by itself). |

| PARTTYPE | PartProperty [Property] | error | All part properties must be typed. |

| PERFORMANCEFUNCTIONREFINE | performanceRequirement [Class] | error | Performance requirements must refine one or more functional requirements. |

| PHYSICALARCH | PartProperty [Property] | error | Part properties that are owned by a block with a physical stereotype must be typed by a block with a physical stereotype. |

| PHYSICALPORT | ProxyPort [Port] | error | All proxy ports owned by blocks with the <<physical>> stereotype applied must be typed by interface blocks that have the <<physical>> stereotype applied. |

| PHYSTERMPARTS | physical [Class] | error | Physical blocks with ATOMIC = TRUE may not own part properties. |

| PROXYPORT | Port | error | All ports must be proxy ports. |

| PROXYPORTTYPE | ProxyPort [Port] | error | Proxy ports must be typed by interface blocks. |

| REALIZEDIRECTION | Realization | error | Realization relationships between logical and physical elements must have the physical element as the source and the logical element as the target. |

| RECEPTIONPROHIBIT | Reception | error | Receptions are prohibited; use operations instead. |

| REFPROPPROHIBIT | ReferenceProperty [Property] | error | Reference properties are prohibited. These may be represented as part properties at a higher level in the system model structure. |

| REGIONNAME | Region | error | Regions of orthogonal states must be named. |

| REQEXTEND | Requirement [Class] | error | Non-extended requirements are forbidden. |

| REQNAMETEXT | extendedRequirement [Class] | error | Requirements must have names and text entries. |

| REQTRACE | Requirement [Class] | error | Requirements must have at least one outgoing trace (to artifact), deriveReqt (found on requirements diagram), or refine relationship. |

| REQUIREMENTSATISFY | Requirement [Class] | info | This requirement does not have any satisfy relationships. (Requirements that have blank text are exempted from this rule). |

| REQUIREMENTVERIFY | Requirement [Class] | info | This requirement does not have at least one verify relationship. (Business requirements or requirements that have blank text are exempted from this rule). |

| SENDINCOMING | SendSignalAction | error | If incoming object flows to a send signal action are realized by an item flow or flow set, the signal sent by the action must be conveyed by the item flow or flow set. |

| SENDSIGNALMATCH | SendSignalAction | error | The signal sent by a send signal action must match the signal typing its input pin (same signal or one of its specific classifiers). |

| SENDSIGNALPIN | SendSignalAction | error | Send signal actions must have at least one input pin. |

| SENDSIGNALPORTMATCH | SendSignalAction | error | The assigned and inferred ports (via item flow realization) must match. |

| SEQUENCELEVEL | Interaction | error | Sequence diagrams may not mix physical and logical lifeline types. |

| SIGNALDOCUMENTATION | Signal | error | All signals must have documentation. |

| SIGNALEVENTSIGNAL | SignalEvent | error | Signal Events must have a signal defined. |

| SIGNALGEN | Signal | error | This signal has one or more general classifiers at different levels of abstraction (used in both logical and physical architectures). Use realization relationships to map between levels of abstraction instead. |

| SIGNALLOOP | Signal | error | This signal is part of a loop (its properties are typed by signals that own properties that are typed by it). |

| SIGNALNAME | Signal | error | All signals must be named. |

| SIGNALSOURCE | Signal | info | This signal is conveyed on an interface but it (or its general classifier) is not an output of any operations. Signals that flow out of external blocks or that type flow properties are exempt. |

| SOFTWAREFUNCTION | software [Class] | info | Software elements must own at least one operation or part property (typed by a software block). |

| SCRCNT | source content [Artifact] | error | All source content elements must have either a file name or hyperlink. |

| STATEDOCUMENTATION | State | error | All states must have documentation. |

| STATEINVARIANTMATCH | StateInvariant | error | The state of a state invariant must be owned by the block typing its lifeline. |

| STATEMACHINEOPERATIONS | Operation | error | State machines may not own operations in their structure (move this operation to a block or activity). |

| STATENAME | State | error | States must be named. |

| STATEOWNER | StateMachine | error | State machines must be owned by blocks or use cases. |

| STATEREACHABILITY | State | error | All states must have at least one incoming transition. |

| STEREOTYPEDOC | Stereotype | error | Stereotypes must be documented. |

| STMACHINENAME | StateMachine | error | State machine names may not be blank. |

| STMCLASSIFIERBEHAVIOR | Block [Class] | error | If a block owns a state machine, that state machine must be the block’s classifier behavior. |

| STMINTEGRITY | StateMachine | error | State machines may only call operations owned within their owning block’s structural decomposition (owned by blocks typing its parts). |

| SUBMACHINECONNECTIONS | State | error | States that are submachines must have all entry and exit points associated with connection points. |

| SUBMACHINESTR | State | error | Submachine states must reference state machines owned by blocks that type part properties within the owning block’s structure. |

| SWIMLANEPROHIBIT | ActivityPartition | error | Swimlanes are prohibited; see customizations for operations and flows that can display part-level ownership if operations are owned by blocks. Dynamic legends may also provide similar functionality to swimlanes in a more compact representation. |

| SYSTEMCONTEXT | Model | info | A model with structural elements should have at least one system context block (external elements and the system of interest should type part properties owned by the context block). |

| TAGDEFINITION | Property | error | Tag definitions must be documented (tag definitions with Visibility = private are exempt). |

| TIMEEVENTWHEN | Transition | error | All transitions triggered by time events must have WHEN defined. |

| TRANSITIONCHOICE | Transition | error | All transitions exiting a choice must have guards defined. |

| TRANSITIONSOURCE | Transition | error | No operation owns an output parameter typed by the signal (or its general classifier) that triggers this transition. |

| TRANSITIONTRIGGER | Transition | error | All transitions (except those exiting connection points or pseudostates) must have triggers. The trigger must also have an event specified. |

| TRANSITIONTRIGGERFLOW | Transition | info | This transition is triggered by a signal but is not associated with any item flows or flow sets. |

| TRIGGERFLOWMISMATCH | Transition | error | The signal triggering this transition is not conveyed on any related item flows or flow sets. |

| TRIGGERLEVEL | Trigger | error | This transition is triggered by a signal used in multiple levels of the architecture (create separate signal taxonomies for logical/physical architectures). |

| UCACTOR | UseCase | error | Use cases not connected to other use cases via extend/include/generalization relationships must be associated with at least one actor (or actor subtype). |

| UCASSOCIATION | Association | error | Use cases may not be associated with other use cases. |

| UCDOCUMENTATION | UseCase | error | All use cases must have documentation. |

| UCTRACE | UseCase | error | All use cases must have an outgoing trace, extend, or refine relationship, or an incoming include relationship. |

2.2 Overview of Recent Systems Engineering Guidance Provided by the National Aeronautics and Space Administration (USA)

Editor’s Note: Excerpts from each of five NASA documents were assembled for PPI SyEN.

Editor Ralph Young provides a concise summary of the SE guidance published by NASA. The underlined document titles help distinguish the five documents.

- NASA/TP–20205003644, Engineering Elegant Systems: Theory of Systems Engineering (June 2020) 283 pages

“This Technical Publication describes a theoretical basis for systems engineering. A framework for the theoretical basis of systems engineering is defined indicating systems engineering has two main focuses: system design and integration, and discipline integration. System engineering processes provide the organization of the system engineering efforts. A set of postulates, principles, and hypotheses are defined to guide the application of systems engineering processes and approaches. System design and integration includes the application of important concepts for systems design, analysis, modeling, and integration including system integrating physics, system state variables, engineering statistics, system value models, and multidisciplinary design optimization. Discipline integration incorporates aspects of sociology in managing the flow of information on the system through the development and operations organization(s). Decision-making structures and flows are discussed as well as cognitive science influences. Social forces influencing the system development and operation, including policy and law, are also addressed. Different modeling types for capturing discipline integration connections and information flows are identified.”

A very significant contribution of this document is NASA’s definition of “complex systems” and its explanation of their properties:

APPENDIX B—PROPERTIES OF COMPLEX SYSTEMS

One key issue that systems engineers must deal with is system complexity. While there are many definitions of complexity, the NASA Systems Engineering Consortium has considered the following definition for the design of large-scale systems: System complexity is defined as a measure of a system’s intricacy and comprehensibleness in interactions within itself and with its environment. This definition points to two factors in complexity: (1) Physical/logical intricacy and (2) human cognitive comprehension. Properties of complex systems are listed in Table 17[1]:

| Property | Characteristics |
| --- | --- |
| Aggregation | Complex systems are aggregations of less complex systems. |
| Emergence | Complex systems have a propensity to exhibit unexpected performance of intended function. Complex systems exhibit properties not present in the individual subsystems but present in the integration of subsystems (emergent property). |
| Interaction | Complex system interactions form networks within the system and with the system environments. Complex system interactions can be understood through control theory. |
| Nonlinearity | Complex systems exhibit nonlinear responses to system stimuli. Complex systems are difficult to predict. |
| Optimality | Complex systems have local optimums (organizational efficiency determines ability to achieve local optimum). |

Table 17 – Complex System Properties

These properties illustrate several important characteristics of complex systems and the importance of engineering the system interactions. Aggregation is perhaps the most important property in terms of system design and analysis. This property indicates that complex systems can be split into smaller systems based on engineering discipline, system function, or both. Thus, the systems engineer can allocate the system design and system analysis by subsystem or function and then recombine the results for a complete system representation. Consideration of the recombination is essential to the systems engineer. The presence of the emergent properties indicates the system responses and interactions are not the sum of the parts. They include additional responses and functions not observed by considering individual subsystems, functions, or disciplines. Recombination and analysis must be conducted on the integrated system to evaluate all the complex system responses and functions. The recombination of functions typically results in nonlinear responses. Indeed, many system responses are nonlinear functions of the subsystem responses.
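As a simple worked illustration of why recombination is not a sum of the parts (an example chosen here, not taken from the NASA text), consider reliability, assuming n subsystems operating in series with independent failures. The system works only if every subsystem works, so the system reliability is the product of the subsystem reliabilities:

```latex
R_{\text{sys}} = \prod_{i=1}^{n} R_i,
\qquad
\frac{\partial R_{\text{sys}}}{\partial R_1} = \prod_{i=2}^{n} R_i,
\qquad
R_1 = R_2 = R_3 = 0.95 \;\Rightarrow\; R_{\text{sys}} = 0.95^{3} \approx 0.857
```

Because the sensitivity to any one subsystem depends on the values of all the others, the effect of improving a single subsystem can only be judged on the integrated system.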

As stated in systems engineering postulate 5 (“Systems engineering influences and is influenced by budget, schedule, policy, and law” [page 15 of this document]), all systems have constraints. Global optimums are typically not a practical engineering result. Complex systems generally have local optimums. These optimums are complex functions of all the system responses and can be difficult to define. This property will be the basis of much research in systems engineering in the future.
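To make the idea of a local optimum concrete, the following minimal Python sketch uses a hypothetical one-parameter “system value” function with two peaks; the function, its numbers, and the `hill_climb` helper are illustrative assumptions, not a NASA method. A greedy search that accepts only improving moves to a neighboring design stops at whichever peak is nearest its starting point, so it can settle on the lower (local) optimum.

```python
def value(x: int) -> int:
    """Hypothetical system value over a design parameter x in 0..20:
    a local peak at x=5 (value 10) and the global peak at x=15 (value 20)."""
    return max(10 - 2 * abs(x - 5), 20 - 2 * abs(x - 15))


def hill_climb(x: int, lo: int = 0, hi: int = 20) -> int:
    """Move to the better neighbor until neither neighbor improves the value."""
    while True:
        neighbors = [n for n in (x - 1, x + 1) if lo <= n <= hi]
        best = max(neighbors, key=value)
        if value(best) <= value(x):
            return x  # no improving neighbor: a (possibly only local) optimum
        x = best


print(hill_climb(2), value(hill_climb(2)))    # 5 10
print(hill_climb(12), value(hill_climb(12)))  # 15 20
```

Starting at x = 2 the search stops at the local peak x = 5, while starting at x = 12 it reaches the global peak x = 15; neither run can tell from local information alone whether the peak it found is the global one.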

The INCOSE Systems Engineering Complexity Primer provides an expansion on these concepts. The primer provides a good basic discussion on system complexity and the characteristics of complex systems. These characteristics have been further elevated using appreciative inquiry methods and applied to the assessment of complex systems. This paper provides improved insight and understanding of complex systems.

- NASA/TP–20205003646, Engineering Elegant Systems: The Practice of Systems Engineering (June 2020) 216 pages

This Technical Publication describes the practical application of the theoretical basis for systems engineering across the engineering lifecycle. Systems engineering is applied through a set of principles, with a focus on both system design and integration and discipline integration. The systems engineering processes are discussed as the means of organizing the engineering efforts. The application of system modeling and analysis approaches across the lifecycle is discussed, including system integrating physics, system state variables, engineering statistics, system value models, Human Systems Integration, and multidisciplinary design optimization. Discipline integration applies sociological principles, organizational management, decision making, cognitive science, and policy and law in the systems engineering context.

- NASA Systems Engineering Handbook, NASA/SP-2016-6105, Rev 2 (Rev 2 supersedes SP-2007-6105 Rev 1, dated December 2007) (Published in 2016) 297 pages

Since the initial writing of NASA/SP-6105 in 1995 and the following revision (Rev 1) in 2007, systems engineering as a discipline at the National Aeronautics and Space Administration (NASA) has undergone rapid and continued evolution. Changes include using Model-Based Systems Engineering to improve development and delivery of products, and accommodating updates to NASA Procedural Requirements (NPR) 7123.1. Lessons learned on systems engineering were documented in reports such as those by the NASA Integrated Action Team (NIAT), the Columbia Accident Investigation Board (CAIB), and the follow-on Diaz Report. Other lessons learned were garnered from the robotic missions such as Genesis and the Mars Reconnaissance Orbiter as well as from mishaps from ground operations and the commercial space flight industry. Out of these reports came the NASA Office of the Chief Engineer (OCE) initiative to improve the overall Agency systems engineering infrastructure and capability for the efficient and effective engineering of NASA systems, to produce quality products, and to achieve mission success. This handbook update is a part of that OCE-sponsored Agency-wide systems engineering initiative. In 1995, SP-6105 was initially published to bring the fundamental concepts and techniques of systems engineering to NASA personnel in a way that recognized the nature of NASA systems and the NASA environment. This revision (Rev 2) of SP-6105 maintains that original philosophy while updating the Agency’s systems engineering body of knowledge, providing guidance for insight into current best Agency practices, and maintaining the alignment of the handbook with the Agency’s systems engineering policy. The update of this handbook continues the methodology of the previous revision: a top-down compatibility with higher level Agency policy and a bottom-up infusion of guidance from the NASA practitioners in the field. 
This approach provides the opportunity to obtain best practices from across NASA and bridge the information to the established NASA systems engineering processes and to communicate principles of good practice as well as alternative approaches rather than specify a particular way to accomplish a task.

Footnotes:

1. Rechtin, Systems Architecting of Organizations: Why Eagles Can’t Swim.

2. Comments on systems engineering throughout Chapter 2.0 are extracted from the speech “System Engineering and the Two Cultures of Engineering” by Michael D. Griffin, NASA Administrator.

The result embodied in this handbook is a top-level implementation approach on the practice of systems engineering unique to NASA. Material used for updating this handbook has been drawn from many sources, including NPRs, Center systems engineering handbooks and processes, other Agency best practices, and external systems engineering textbooks and guides. This handbook consists of six chapters: (1) an introduction, (2) a systems engineering fundamentals discussion, (3) the NASA program/project life cycles, (4) systems engineering processes to get from a concept to a design, (5) systems engineering processes to get from a design to a final product, and (6) crosscutting management processes in systems engineering. The chapters are supplemented by appendices that provide outlines, examples, and further information to illustrate topics in the chapters. The handbook makes extensive use of boxes and figures to define, refine, illustrate, and extend concepts in the chapters. Finally, it should be noted that this handbook provides top-level guidance for good systems engineering practices; it is not intended in any way to be a directive.

This handbook should be used as a companion for implementing NPR 7123.1, Systems Engineering Processes and Requirements, as well as the Center-specific handbooks and directives developed for implementing systems engineering at NASA. It provides a companion reference book for the various systems engineering-related training being offered under NASA’s auspices.

- Expanded Guidance for NASA Systems Engineering, Volume 1: Systems Engineering Practices (March 2016) 383 pages

This document is intended to provide general guidance and information on systems engineering that will be useful to the NASA community. It provides a generic description of Systems Engineering (SE) as it should be applied throughout NASA. A goal of the expanded guidance is to increase awareness and consistency across the Agency and advance the practice of SE. This guidance provides perspectives relevant to NASA and data particular to NASA. This expanded guidance should be used as a companion for implementing NPR 7123.1, Systems Engineering Processes and Requirements, the Rev 2 version of SP-6105, and the Center-specific handbooks and directives developed for implementing systems engineering at NASA. It provides a companion reference book for the various systems engineering-related training being offered under NASA’s auspices.

Author: Hirshorn, Steven R. (NASA Headquarters, Washington, DC, United States)

Publication Date: March 1, 2016

Report/Patent Number: HQ-E-DAA-TN42999; NASA/SP-2016-6105/SUPPL/Vol 1

Distribution Limits: Public

Preface:

Since the initial writing of NASA/SP-6105 in 1995 and the following revision (Rev 1) in 2007, systems engineering as a discipline at the National Aeronautics and Space Administration (NASA) has undergone rapid and continued evolution. Changes include implementing standards in the International Organization for Standardization (ISO) 9000; using Model-Based Systems Engineering to improve development and delivery of products; and accommodating updates to NASA Procedural Requirements (NPR) 7123.1. Lessons learned concerning systems engineering were documented in reports such as those by the NASA Integrated Action Team (NIAT), the Columbia Accident Investigation Board (CAIB), and the follow-on Diaz Report. Other lessons learned were garnered from the robotic missions such as Genesis and the Mars Reconnaissance Orbiter, as well as from mishaps from ground operations and the commercial spaceflight industry. Out of these reports came the NASA Office of the Chief Engineer (OCE) initiative to improve the overall Agency systems engineering infrastructure and capability for the efficient and effective engineering of NASA systems, to produce quality products, and to achieve mission success. In 1995, SP-6105 was initially published to bring the fundamental concepts and techniques of systems engineering to NASA personnel in a way that recognized the nature of NASA systems and the NASA environment. In 2007, Rev 1 of the handbook was finalized and distributed. While updating the 2007 Rev 1 version of the NASA Systems Engineering Handbook for a new Rev 2 version, authors from across the Agency submitted a wealth of information that not only expanded on the content of the earlier version but also added entire new sections. This body of knowledge has been captured in this document as “Expanded Guidance for NASA Systems Engineering,” presented in a two-volume set.
The more than 700 pages of information are considered a relevant reference for the larger NASA systems engineering community of practitioners. The official second revision of the NASA Systems Engineering Handbook filters out some of this information that is ancillary to implementing NPR 7123.1 in order to condense it into a more manageable version usable as a handbook. The official revised NASA Systems Engineering Handbook is a more focused “core” version of the information in this expanded guidance document. This expanded guidance continues the methodology of the SE Handbook: a top-down compatibility with higher level Agency policy and a bottom-up infusion of guidance from the NASA practitioners in the field. This approach provides the opportunity to obtain best practices from across NASA and bridge the information to the established NASA systems engineering processes and to communicate principles of good practice as well as alternative approaches rather than specify a particular way to accomplish a task. The result embodied in this handbook is a top-level implementation approach on the practice of systems engineering unique to NASA. Material used for updating this handbook has been drawn from many sources, including NPRs, Center systems engineering handbooks and processes, other Agency best practices, and external systems engineering textbooks and guides.
This expanded guidance consists of eight chapters: (1) an introduction, (2) a systems engineering fundamentals discussion, (3) the NASA program/project life cycles, (4) systems engineering processes to get from a concept to a design, (5) systems engineering processes to get from a design to a final product, (6) crosscutting management processes in systems engineering, (7) crosscutting topics, and (8) special topics related to systems engineering that are not yet considered established best practices within the Agency but are included as reference and source material for practitioners. The chapters are supplemented by appendices that provide outlines, examples, and further information to illustrate topics in the chapters. This expanded guidance makes extensive use of boxes and figures to define, refine, illustrate, and extend concepts in the chapters. Finally, it should be noted that this document provides top-level guidance for good systems engineering practices; it is not intended in any way to be a directive.

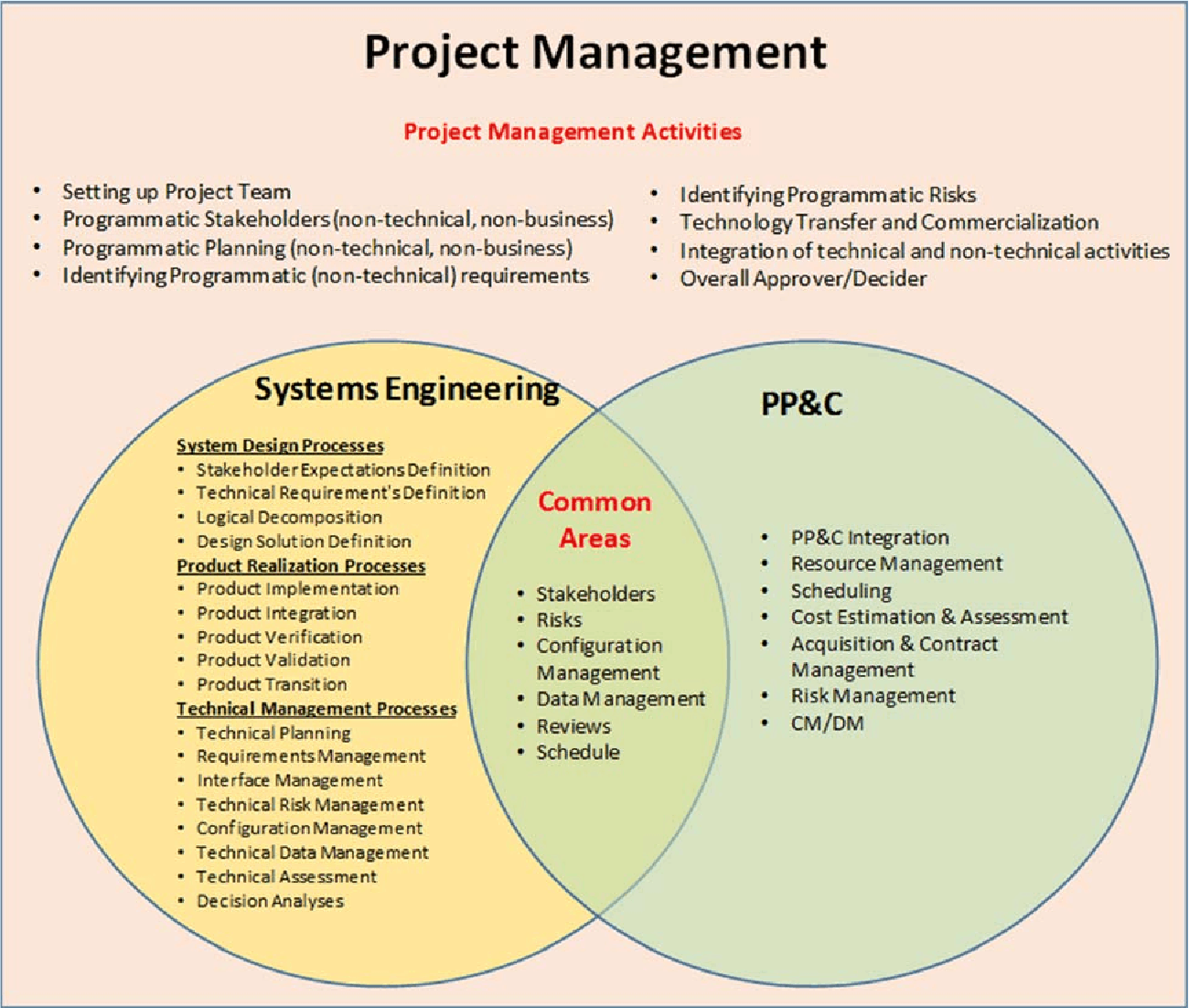

2.0 Fundamentals of Systems Engineering

At NASA, “systems engineering” is defined as a methodical, multi-disciplinary approach for the design, realization, technical management, operations, and retirement of a system. A “system” is the combination of elements that function together to produce the capability required to meet a need. The elements include all hardware, software, equipment, facilities, personnel, processes, and procedures needed for this purpose; that is, all things required to produce system-level results. The results include system-level qualities, properties, characteristics, functions, behavior, and performance. The value added by the system as a whole, beyond that contributed independently by the parts, is primarily created by the relationship among the parts; that is, how they are interconnected.1 It is a way of looking at the “big picture” when making technical decisions. It is a way of achieving stakeholder functional, physical, and operational performance requirements in the intended use environment over the planned life of the system within cost, schedule, and other constraints. It is a methodology that supports the containment of the life cycle cost of a system. In other words, systems engineering is a logical way of thinking. Systems engineering is the art and science of developing an operable system capable of meeting requirements within often opposed constraints. Systems engineering is a holistic, integrative discipline, wherein the contributions of structural engineers, electrical engineers, mechanism designers, power engineers, human factors engineers, and many more disciplines are evaluated and balanced, one against another, to produce a coherent whole that is not dominated by the perspective of a single discipline.2 Systems engineering seeks a safe and balanced design in the face of opposing interests and multiple, sometimes conflicting constraints. 
The systems engineer should develop the skill for identifying and focusing efforts on assessments to optimize the overall design and not favor one system/subsystem at the expense of another while constantly validating that the goals of the operational system will be met. The art is in knowing when and where to probe. Personnel with these skills are usually tagged as “systems engineers.” They may have other titles—lead systems engineer, technical manager, chief engineer— but for this document, the term systems engineer is used. The exact role and responsibility of the systems engineer may change from project to project depending on the size and complexity of the project and from phase to phase of the life cycle. For large projects, there may be one or more systems engineers. For small projects, the project manager may sometimes perform these practices. But whoever assumes those responsibilities, the systems engineering functions should be performed. The actual assignment of the roles and responsibilities of the named systems engineer may also therefore vary. The lead systems engineer ensures that the system technically fulfills the defined needs and requirements and that a proper systems engineering approach is being followed. The systems engineer oversees the project’s systems engineering activities as performed by the technical team and directs, communicates, monitors, and coordinates tasks. The systems engineer reviews and evaluates the technical aspects of the project to ensure that the systems/subsystems engineering processes are functioning properly and evolves the system from concept to product. The entire technical team is involved in the systems engineering process. 
The systems engineer usually plays the key role in leading the development of the concept of operations (ConOps) and resulting system architecture, defining boundaries, defining and allocating requirements, evaluating design tradeoffs, balancing technical risk between systems, defining and assessing interfaces, and providing oversight of verification and validation activities, as well as many other tasks. The systems engineer typically leads the technical planning effort and has the prime responsibility in documenting many of the technical plans, including the Systems Engineering Management Plan (SEMP), ConOps, Human Systems Integration (HSI) Plan, requirements and specification documents, verification and validation documents, certification packages, and other technical documentation. In summary, the systems engineer is skilled in the art and science of balancing organizational, cost, and technical interactions in complex systems. The systems engineer and supporting organization are vital to supporting program and Project Planning and Control (PP&C) with accurate and timely cost and schedule information for the technical activities. Systems engineering is about tradeoffs and compromises; it uses a broad crosscutting view of the system rather than a single discipline view. Systems engineering is about looking at the “big picture” and not only ensuring that they get the design right (meet requirements) but that they also get the right design (enable operational goals and meet stakeholder expectations). Systems engineering plays a key role in the project organization. Managing a project consists of three main objectives: managing the technical aspects of the project, managing the project team, and managing the cost and schedule. As shown in Figure 2.0-1, these three functions are interrelated. Systems engineering is tightly related to the technical aspects of program and project management. 
As discussed in NPR 7120.5, NASA Space Flight Program and Project Management Requirements, project management is the function of planning, overseeing, and directing the numerous activities required to achieve the requirements, goals, and objectives of the customer and other stakeholders within specified cost, quality, and schedule constraints. Similarly, NPR 7120.8, NASA Research and Technology Program and Project Management Requirements, states that the program or project lead (i.e., management) is responsible for the formulation and implementation of the R&T program or project; and NPR 7120.7, NASA Information Technology and Institutional Infrastructure Program and Project Management Requirements, refers project managers to NPR 7123.1, NASA Systems Engineering Processes and Requirements, for systems engineering requirements. Systems engineering is focused on the technical characteristics of decisions including technical, cost, and schedule and on providing these to the project manager. The project manager is responsible for ensuring that the project delivers the system within cost and schedule. The overlap in these responsibilities is natural, with the systems engineer focused on the success of the engineering of the system (technical, cost, schedule) and the project manager providing constraints on engineering options to maintain a successful delivery of the system within cost and schedule. These areas are systems engineering and project control. Figure 2.0-1 is a notional graphic depicting this concept. Note that there are areas where the two cornerstones of project management, SE and PP&C, overlap. In these areas, SE provides the technical aspects or inputs, whereas PP&C provides the programmatic, cost, and schedule inputs. This document focuses on the SE side of the diagram. The practices/processes are taken from NPR 7123.1, NASA Systems Engineering Processes and Requirements.
Each process is described in much greater detail in subsequent chapters of this document, but an overview is given in the following subsections of this chapter. Figure 2.0-1 provides a graphic describing SE in Context of Overall Project Management. A NASA systems engineer can participate in the NASA Engineering Network (NEN) Systems Engineering Community of Practice, located at https://www.nasa.gov/content/nasa-engineering-network-communities-of-practice/index.html. This Web site includes many resources useful to systems engineers, including document templates for many of the work products and milestone review presentations required by the NASA SE process.

Figure 2.0-1 SE in Context of Overall Project Management

- Expanded Guidance for NASA Systems Engineering, Volume 2: Crosscutting Topics, Special Topics, and Appendices (March 2016), 272 pages

Historically, most successful NASA projects have depended on effectively blending project management, systems engineering, and technical expertise among NASA, contractors, and third parties. Underlying these successes are a variety of agreements (e.g., contract, memorandum of understanding, grant, cooperative agreement) between NASA organizations or between NASA and other Government agencies, Government organizations, companies, universities, research laboratories, and so on. To simplify the discussions, the term “contract” is used to encompass these agreements. This section focuses on the NASA systems engineering activities pertinent to awarding a contract, managing contract performance, and completing a contract. In particular, NASA systems engineering interfaces to the procurement process are covered, since the NASA engineering technical team plays a key role in the development and evaluation of contract documentation. Contractors and third parties perform activities that supplement (or substitute for) the NASA project technical team’s accomplishment of the NASA common systems engineering technical process activities and requirements outlined in this guide. Since contractors might be involved in any part of the systems engineering life cycle, the NASA project technical team needs to know how to prepare for, allocate or perform, and implement surveillance of technical activities that are allocated to contractors.

Authors

Hirshorn, Steven R. (NASA Headquarters, Washington, DC, United States)

Publication Date

March 16, 2017

Report/Patent Number

NASA/SP-2016-6105/SUPPL/Vol 2

HQ-E-DAA-TN42999

Distribution Limits

Public

Related Records

See Also NASA Systems Engineering Handbook

See Also Expanded Guidance for NASA Systems Engineering, Volume 1: Systems Engineering Practices

6.0 Crosscutting Topics

The topics in this chapter cut across all life-cycle phases and are of special interest for enhancing the performance of the systems engineering process or constitute special considerations in the performance of systems engineering. These topics include the following:

- Engineering with contracts: applying systems engineering principles to contracting and contractors;

- Concurrent engineering methods: diverse specialists systematically collaborating simultaneously in a shared environment, real or virtual, to yield an integrated design;

- Selecting engineering design tools: integrated design facilities and tools;

- Environmental, nuclear safety, and planetary protection policy compliance: protecting the environment and discussing the importance of the Nation’s space assets;

- Use of the metric system;

- Systems engineering on multi-level/multi-phase programs and projects: special considerations;

- Fault management: understanding and managing the off-nominal system behaviors;

- Technical margins: establishing and managing for contingencies to reduce development risk and increase the chance for mission success; and

- Human systems integration: balancing total system safety and effectiveness to ensure mission success.

6.1.2 Acquisition Strategy

While this section pertains to projects where the decision has already been made to have a contractor implement a portion of the project, it is important to remember that the choice between “making” a product in-house by NASA and “buying” it from a contractor is one of the most crucial decisions in systems development.

7.0 Special Topics

The articles in this chapter represent topics that are of special interest, may be relatively new to the Agency, or may be new methods that can provide benefit. These topics represent useful approaches to systems engineering, and the sections below provide information on the application of statistical engineering and Model-Based Systems Engineering (MBSE) on programs, projects, or activities. As these topics are still emerging in their forms and applications within the Agency, there is flexibility in how to apply these methods to a particular program, project, or activity. In today’s computer-based, data-rich world, the systems engineer needs to deal with statistical information and model-based engineering approaches employed by various engineering disciplines. The extent to which statistical engineering and MBSE are applied depends on the systems engineer’s judgment of the benefits these approaches provide to technical, schedule, and cost performance. The systems engineer should also consider the organizational effects of applying these methods, including efficiency and the organization’s cultural acceptance.

“MBSE is part of a long-term trend toward model-centric approaches adopted by other engineering disciplines, including mechanical, electrical and software. In particular, MBSE is expected to replace the document-centric approach that has been practiced by systems engineers in the past and to influence the future practice of systems engineering by being fully integrated into the definition of systems engineering processes.” (Source: INCOSE 2007)

7.2.7 MBSE Benefits

Model-based systems engineering offers the opportunity for better overall quality, lower cost, and lower risk. These benefits come about because:

- There can be greater consistency across all products, because any single piece of design information can be expressed authoritatively in a single place that others can later refer to for decisions, derivations, or formation of artifacts.

- There can be better visibility into the salient characteristics of a system, because multiple views can be created that succinctly address specific stakeholder concerns.

- There can be greater congruence between documentation and reality: model-based artifacts can be generated automatically, which lowers the effort to keep them up to date, so artifacts can always match the best available information.

- Navigation, traceability, and interrogation of information are facilitated in the model-based approach. People can access the information they are authorized to have more quickly and on an as-needed basis, without going through manual distribution or search processes.

- Models used for verification can have higher quality and provide greater confidence if design and manufacturing models are applied diligently before and after use of the verification models.

- Models themselves can help reveal hidden flaws in the models.

- There can be less investment lost to erroneous design, because a model sometimes reveals a flaw as soon as it is created. The flaw can then be corrected before downstream work is done that would be invalidated if the upstream mistake were not corrected immediately.

- Having fewer inconsistencies between artifacts lowers the costs of verification.

- MBSE provides identification, management, interoperability, and integration of information across the business or organizational elements needed to support program Product Data and Life-cycle Management (PDLM) goals.

- MBSE ensures that data needed by programs and projects (e.g., for milestones, reviews, mission operations, and anomalies or investigations, decisions, and outcomes) are identified and managed to provide traceability of the data used in decision-making.
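Several of the benefits above (a single authoritative source, automatically generated artifacts, traceability queries, and early detection of flaws) can be illustrated in a few lines of code. The following is a minimal Python sketch under stated assumptions: the `Requirement` and `SystemModel` classes, their fields, and the `REQ-00x` identifiers are hypothetical and are not SysML or the API of any MBSE tool. Each requirement is stored once, the specification text is generated from the model, a trace query interrogates it, and a consistency check flags allocation errors as soon as they enter the model.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Requirement:
    """One requirement, stored once in the model (hypothetical schema)."""
    req_id: str
    text: str
    allocated_to: list[str] = field(default_factory=list)  # component names


@dataclass
class SystemModel:
    """Hypothetical single authoritative store of design information."""
    components: set[str] = field(default_factory=set)
    requirements: dict[str, Requirement] = field(default_factory=dict)

    def add_requirement(self, req: Requirement) -> None:
        self.requirements[req.req_id] = req

    def trace(self, component: str) -> list[str]:
        """Interrogate the model: which requirements are allocated to a component?"""
        return sorted(r.req_id for r in self.requirements.values()
                      if component in r.allocated_to)

    def check_consistency(self) -> list[str]:
        """Report allocation flaws; rerun after every change so errors surface early."""
        issues: list[str] = []
        for r in self.requirements.values():
            if not r.allocated_to:
                issues.append(f"{r.req_id}: not allocated to any component")
            for comp in r.allocated_to:
                if comp not in self.components:
                    issues.append(f"{r.req_id}: allocated to unknown component '{comp}'")
        return issues

    def render_spec(self) -> str:
        """Generate a specification view from the model rather than writing it by hand."""
        lines = []
        for rid in sorted(self.requirements):
            r = self.requirements[rid]
            targets = ", ".join(r.allocated_to) or "none"
            lines.append(f"{rid}: {r.text} [allocated to: {targets}]")
        return "\n".join(lines)


if __name__ == "__main__":
    model = SystemModel(components={"Power", "Thermal"})
    model.add_requirement(Requirement("REQ-001", "Provide 500 W at end of life.", ["Power"]))
    model.add_requirement(Requirement("REQ-002", "Keep avionics within limits.", ["Thermal", "Avionics"]))
    print(model.check_consistency())  # flags the unknown 'Avionics' component
    print(model.trace("Power"))       # ['REQ-001']
    print(model.render_spec())
```

Because `render_spec` regenerates the document from the model, editing a requirement in one place updates every generated view. A real MBSE environment holds far richer semantics (behavior, interfaces, parametrics), so this sketch only illustrates the principle.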

7.3 Concept Maturity Levels

Concept Maturity Levels (CMLs) were introduced to provide mission architects and systems engineers with a way to measure and communicate the fidelity and accuracy of a mission concept during the early stages of its life cycle. The CMLs form a scale that provides a repeatable way to assess and describe the maturity of concepts, and a single numerical scale, comparable to the Technology Readiness Level (TRL) scale, for assessing the maturity of different mission concepts. Mission concept development teams use this method and associated tools throughout the pre-project study phase and on through the Formulation phases (Phase A/B). Before the CML scale existed, there were no standardized methods to (1) determine how much work had been put into a mission concept; (2) know explicitly when in a pre-project’s life cycle trade space exploration would be most advantageous for ensuring that a mission concept was the most scientifically relevant and cost-effective; (3) determine which concepts had the same level of work and could be compared on the same terms; and (4) determine how much work a mission concept required to achieve a subsequent level of maturity.
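Point (3) above, deciding which concepts can be compared on the same terms, is the kind of bookkeeping a single numerical scale makes simple. The following minimal Python sketch assumes an integer CML scale from 1 to 9 mirroring TRL (an assumption; confirm the range against the CML definition actually in use) and a hypothetical `MissionConcept` record with illustrative concept names. It buckets concepts by assessed maturity so that only concepts at the same level are compared directly.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass

# Assumption: an integer scale from 1 to 9, mirroring TRL. Check this range
# against the CML definition your organization actually uses.
MIN_CML, MAX_CML = 1, 9


@dataclass(frozen=True)
class MissionConcept:
    """A hypothetical mission concept tagged with its assessed CML."""
    name: str
    cml: int

    def __post_init__(self) -> None:
        # Reject levels outside the assumed scale.
        if not MIN_CML <= self.cml <= MAX_CML:
            raise ValueError(f"{self.name}: CML {self.cml} outside {MIN_CML}..{MAX_CML}")


def comparable(a: MissionConcept, b: MissionConcept) -> bool:
    """Concepts at the same CML reflect a similar depth of work."""
    return a.cml == b.cml


def group_by_cml(concepts: list[MissionConcept]) -> dict[int, list[str]]:
    """Bucket concept names by maturity level, ordered by level and then by name."""
    groups: dict[int, list[str]] = defaultdict(list)
    for concept in concepts:
        groups[concept.cml].append(concept.name)
    return {level: sorted(names) for level, names in sorted(groups.items())}


if __name__ == "__main__":
    studies = [MissionConcept("Orbiter-A", 4), MissionConcept("Lander-B", 2),
               MissionConcept("Orbiter-C", 4)]
    print(group_by_cml(studies))  # {2: ['Lander-B'], 4: ['Orbiter-A', 'Orbiter-C']}
```

Sharing a level only indicates a similar depth of study, not equal merit; the engineering comparison of concepts within a bucket still has to be done.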

Appendix A: Acronyms

Appendix B: Glossary

Appendix C: How to Write a Good Requirement – Checklist

Appendix D: Requirements Verification Matrix

Appendix E: Creating the Validation Plan with a Validation Requirements Matrix

Appendix F: Functional, Timing, and State Analysis

Appendix G: Technology Assessment / Insertion

Appendix H: Integration Plan Outline

Appendix I: Verification and Validation Plan Outline

Appendix J: SEMP Content Outline

Appendix K: Technical Plans

Appendix L: Interface Requirements Document Outline

Appendix M: CM Plan Outline

Appendix N: Guidance on Technical Peer Reviews/Inspections

Appendix O: Reserved

Appendix P: SOW Review Checklist

Appendix Q: Reserved

Appendix R: HSI Plan Content Outline

Appendix S: Concept of Operations Annotated Outline

Appendix T: Systems Engineering in Phase E (Operations and Sustainment)

T.1 Overview

In general, normal Phase E activities reflect a reduced emphasis on system design processes but a continued focus on product realization and technical management. Product realization process execution in Phase E takes the form of continued mission plan generation (and update), response to changing flight conditions (and occurrence of in-flight anomalies), and update of mission operations techniques, procedures, and guidelines based on operational experience gained. Technical management processes ensure that appropriate rigor and risk management practices are applied in the execution of the product realization processes. Successful Phase E execution requires the prior establishment of mission operations capabilities in four (4) distinct categories: tools, processes, products, and trained personnel. These capabilities may be developed as separate entities, but need to be fused together in Phase E to form an end-to-end operational capability. Although systems engineering activities and processes are constrained throughout the entire project life cycle, additional pressures exist in Phase E:

Increased resource constraints – Even when additional funding or staffing can be secured, building new capabilities or training new personnel may require more time or effort than is available. Project budget and staffing profiles generally decrease at or before entry into Phase E, and the remaining personnel are typically focused on mission execution.

Unforgiving schedule – Unlike pre-flight test activities, mission execution is typically difficult or even impossible to truly pause after launch in order to deal with technical issues on a spacecraft in operation. These factors must be addressed when considering activities that introduce change and risk during Phase E.

3.1 Leading the Transformation of Model-based Engineering

by

Al Hoheb

Enterprise Transformation Leader

The Aerospace Corporation

Operator of a Federally Funded Research and Development Center (FFRDC)

(Notes provided by Ralph Young, Editor, PPI SyEN)

Al Hoheb presented an INCOSE Tutorial (Webinar), “Leading the Transformation of Model-based Engineering”, on August 19, 2020. Al is the author of the INCOSE Model-based Capabilities Matrix and Users Guide (available on the INCOSE website; see below).

Following are some of the key points that were emphasized in this briefing.

- It’s important for the leader to ensure that the purpose and objectives of the organization’s modeling efforts are derived from strategic plans. Engaging internal and external stakeholders to agree on modeling objectives yields a useful document in itself, which is typically incorporated into a Digital Engineering/Model Implementation Plan or CONOPS that encompasses the life cycle and characterizes the stages of modeling capability development.

- The model-based implementation approach involves:

- Determining the vision and goals of the modeling effort.

- Determining the enterprise modeling objectives based on the organizational goals, objectives, and strategy.

- Defining the modeling objectives to target the needed modeling capabilities.

- Defining the modeling elements and data necessary to meet modeling objectives.